Making a Robot Dog Stand: The Hidden Challenges Before Training Begins

Every reinforcement learning demo follows the same script: import robot, run training, show walking video. What nobody shows you is the hours spent before training even starts — getting a custom robot to simply stand upright without falling on its face.

This is the story of Angel D01, a custom 12-DOF quadruped robot I'm building from scratch. It weighs 6.65 kg, was designed in SolidWorks, and lives inside NVIDIA Isaac Lab for simulation. Before I could teach it to walk with RL, I needed to solve a deceptively hard problem: making it hold a stable standing pose under gravity, using nothing but PD control.

It sounds trivial. It isn't.

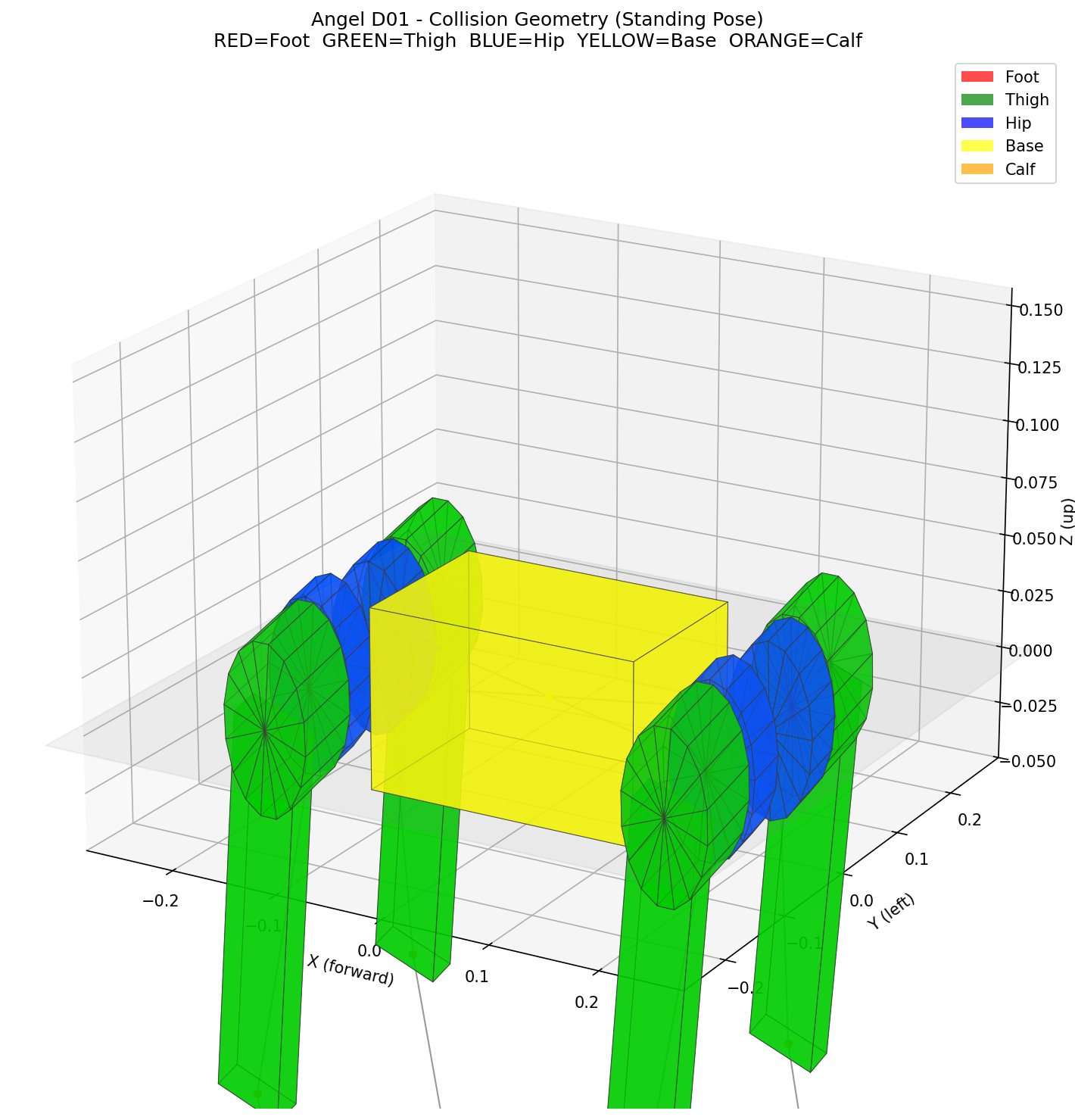

Angel D01 collision geometry in standing pose

Angel D01's collision geometry — the simplified shapes the physics engine actually simulates. Each color represents a different body part: base (yellow), hips (blue), thighs (green), calves (orange), and feet (red).

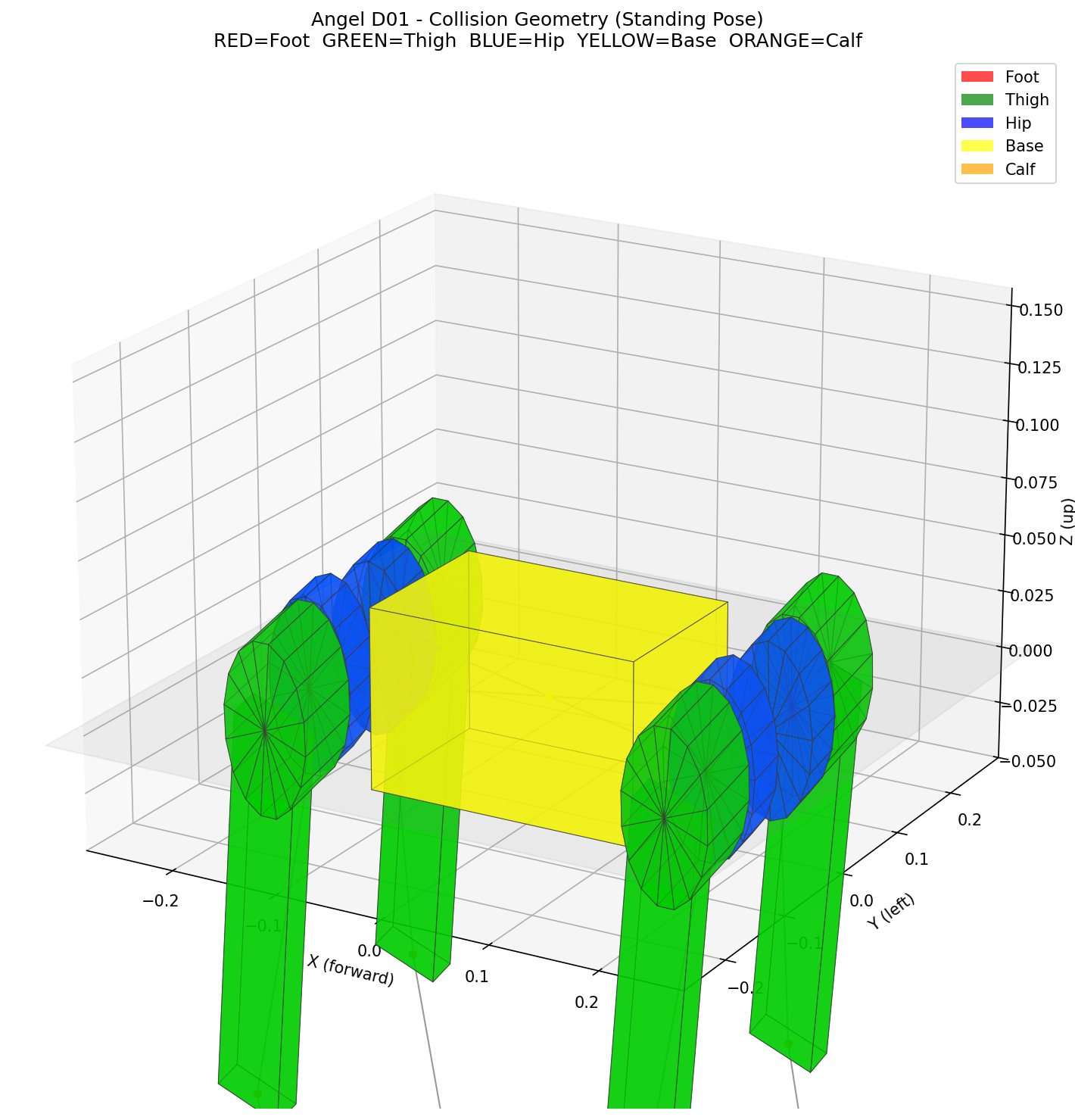

Angel D01 collision geometry in standing pose

Angel D01's collision geometry — the simplified shapes the physics engine actually simulates. Each color represents a different body part: base (yellow), hips (blue), thighs (green), calves (orange), and feet (red).

Finding the Perfect Stance

When I first spawned Angel D01 in simulation, it immediately pitched forward and collapsed. The robot's center of mass (CoM) was sitting ahead of its support polygon, the area enclosed by the points where the feet contact the ground. Gravity did the rest.

Center of mass and support polygon diagram

Left: when the CoM projection lands ahead of the support polygon's center, gravity creates a forward torque that the PD controller can't overcome. Right: centering the CoM over the support polygon produces a level, stable stance.
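To make the idea concrete, here is a minimal sketch of that check. It is not the script from this project: the foot positions and CoM location are made-up numbers, and the "center" of the support polygon is approximated as the mean of the four foot contact points, which is only exact for a symmetric stance.

```python
from statistics import fmean


def com_offset_from_support_center(com_xy, feet_xy):
    """Return (dx, dy): CoM ground projection minus the mean foot position."""
    center_x = fmean(x for x, _ in feet_xy)
    center_y = fmean(y for _, y in feet_xy)
    return com_xy[0] - center_x, com_xy[1] - center_y


# Hypothetical foot contact points in the base frame (metres), x pointing forward.
feet = [(0.20, 0.10), (0.20, -0.10), (-0.20, 0.10), (-0.20, -0.10)]

# Hypothetical CoM projection sitting 5 cm ahead of the middle of the stance.
dx, dy = com_offset_from_support_center((0.05, 0.0), feet)
print(f"CoM offset from support center: dx={dx:+.3f} m, dy={dy:+.3f} m")
```

A positive `dx` here means the CoM projects ahead of the middle of the stance, which is the situation on the left of the diagram.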


This is a problem that doesn't exist with off-the-shelf robots. Their default poses have been carefully calibrated by the manufacturer. When you build your own robot, you're starting from a CAD model and a URDF file — there's no one to tell you what the "right" joint angles are.

My approach was to work the problem from both ends. First, I wrote a forward kinematics script to understand how each joint angle maps to foot position. This immediately revealed something subtle about the URDF: the front and rear hip flexion joints rotate in opposite directions. A positive angle that pushes the front thigh forward pushes the rear thigh backward. Using the same value for all legs — the obvious first attempt — created a wildly asymmetric pose with nearly 17 cm of height difference between front and rear.
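The sign flip is easy to see in a toy planar model. The sketch below is an illustration, not my actual FK script: the 0.20 m link lengths, the `hip_axis_sign` parameter, and the assumption that the knee folds the calf backward on every leg are all hypothetical, so the numbers will not match the real robot.

```python
import math


def foot_offset(hfe, kfe, hip_axis_sign, thigh_len=0.20, calf_len=0.20):
    """Planar 2-link FK: foot position (x forward, z up) relative to the hip.

    hip_axis_sign is +1 for a front leg and -1 for a rear leg (assumed), so the
    same commanded hfe swings front and rear thighs in opposite directions.
    """
    thigh_pitch = hip_axis_sign * hfe   # positive = thigh swings forward
    calf_pitch = thigh_pitch - kfe      # assumed: knee folds the calf backward
    x = thigh_len * math.sin(thigh_pitch) + calf_len * math.sin(calf_pitch)
    z = -(thigh_len * math.cos(thigh_pitch) + calf_len * math.cos(calf_pitch))
    return x, z


front = foot_offset(0.5, 0.88, hip_axis_sign=+1)
rear_same_value = foot_offset(0.5, 0.88, hip_axis_sign=-1)   # naive: same value
rear_flipped = foot_offset(-0.5, 0.88, hip_axis_sign=-1)     # sign flipped

print(f"front foot height:            {front[1]:+.3f} m")
print(f"rear foot height, same value: {rear_same_value[1]:+.3f} m")
print(f"rear foot height, flipped:    {rear_flipped[1]:+.3f} m")
```

In this toy model, flipping the sign of the rear hip command makes the rear foot land at the same height as the front one, while reusing the same value leaves a large front-to-rear height gap.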

With the FK relationships understood, I set up a sweep of 7 pose candidates. Each variant used a different hip-knee angle combination, all designed to produce roughly the same foot spread but with different weight distributions. I spawned all 7 in simulation simultaneously, let them settle under gravity for a few seconds, and measured the steady-state pitch.
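For the measurement step, pitch can be read off the base orientation quaternion. This is a minimal sketch that assumes the quaternion is ordered (w, x, y, z) and that pitch means rotation about the y axis in the usual roll-pitch-yaw decomposition; the function name is my own for illustration, and which sign counts as nose-down depends on the robot's frame convention.

```python
import math


def pitch_from_quat_wxyz(w, x, y, z):
    """Pitch angle in degrees from a unit quaternion ordered (w, x, y, z)."""
    sin_pitch = 2.0 * (w * y - z * x)
    # Clamp to guard against values slightly outside [-1, 1] from rounding.
    sin_pitch = max(-1.0, min(1.0, sin_pitch))
    return math.degrees(math.asin(sin_pitch))


# Sanity check: build a pure rotation of -1.7 degrees about y and recover it.
theta = math.radians(-1.7)
quat = (math.cos(theta / 2), 0.0, math.sin(theta / 2), 0.0)
print(f"pitch = {pitch_from_quat_wxyz(*quat):.2f} deg")
```

In a sweep, you would evaluate this once per candidate after the robots have settled and keep the pose whose pitch is closest to zero.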

Pose sweep results showing pitch for 7 different joint angle combinations

Seven pose variants simulated simultaneously. The sweet spot (HFE=0.50, KFE=0.88) achieves just -1.7° of pitch, nearly perfectly level. More crouched poses (left) push the feet backward, shifting the support polygon behind the CoM. Poses that are too upright (right) leave the knees too little bend to absorb disturbances.


The winner was clear: hip flexion at 0.50 rad with knee flexion at 0.88 rad, producing just -1.7° of pitch. More crouched poses (larger hip angles) pushed the feet backward, moving the support polygon behind the CoM and causing forward pitch. Going too upright reduced the knees' ability to absorb disturbances.

Here's the final standing pose configuration:

    joint_pos = {
        ".*FL_HAA": 0.1, ".*FR_HAA": -0.1,    # Hip abduction
        ".*RL_HAA": 0.1, ".*RR_HAA": -0.1,
        ".*FL_HFE": 0.5, ".*FR_HFE": 0.5,     # Hip flexion (front)
        ".*RL_HFE": -0.5, ".*RR_HFE": -0.5,   # Hip flexion (rear, opposite sign!)
        ".*_KFE": 0.88,                       # Knee flexion (all legs)
    }

The Drop Test

Finding the right pose was only half the battle. The other half was making sure the PD controller — the low-level joint controller that tries to hold each joint at its target angle — was tuned correctly for this robot's mass.

I set up a side-by-side drop test: Angel D01 next to a well-known 16 kg commercial quadruped. Both robots spawned at their default poses and held position with PD control only — no learned policy, no balance controller, just springs and dampers.
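"Springs and dampers" is close to literal. A joint-level PD law, in its textbook form with a zero velocity target, looks roughly like the sketch below. The function names and the example numbers are mine for illustration; the simulator's actuator model may differ in details such as torque limits.

```python
def pd_torque(kp, kd, q_target, q, q_vel):
    """Textbook PD law: a spring toward q_target plus a damper on joint velocity."""
    return kp * (q_target - q) - kd * q_vel


def static_sag(kp, gravity_torque):
    """Steady-state position error (rad) needed for the spring to balance gravity."""
    return gravity_torque / kp


# Hypothetical knee: target 0.88 rad, currently at 0.80 rad and at rest.
tau = pd_torque(kp=25.0, kd=0.5, q_target=0.88, q=0.80, q_vel=0.0)
print(f"restoring torque: {tau:.2f} N*m")

# Hypothetical 2 N*m gravity load: higher stiffness means less sag.
print(f"sag at Kp=25: {static_sag(25.0, 2.0):.3f} rad")
print(f"sag at Kp=10: {static_sag(10.0, 2.0):.3f} rad")
```

With no integral term, the joint always rests slightly off target: the spring only produces torque when there is an error, so the settled pose depends on both the gains and the load.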

The commercial robot uses PD gains of Kp=25 (stiffness) and Kd=0.5 (damping) for its 16 kg body. When I initially used those same gains on Angel D01, something was clearly wrong. The legs behaved like rigid rods — they wouldn't flex or yield naturally. The math explains why: PD torque scales with stiffness, but gravitational torque scales with mass. At 6.65 kg with Kp=25, the torque-to-gravity ratio was 4.26x — over four times stiffer than intended. The robot's joints were so stiff that it couldn't develop a natural gait during later RL training. It would just stand still.

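The fix was mass-proportional scaling: shrink the gains by the ratio of the two robots' masses, which takes Kp from 25 down to roughly 10.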
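As a rough sketch, assuming the scaling is a simple linear ratio of total mass, the arithmetic looks like this. The helper name is hypothetical, and applying the same ratio to Kd is an assumption on my part for illustration.

```python
def scale_gain(ref_gain, ref_mass_kg, mass_kg):
    """Scale a PD gain linearly with the ratio of robot masses."""
    return ref_gain * mass_kg / ref_mass_kg


REF_MASS_KG = 16.0     # commercial quadruped
ANGEL_MASS_KG = 6.65   # Angel D01

kp = scale_gain(25.0, REF_MASS_KG, ANGEL_MASS_KG)
kd = scale_gain(0.5, REF_MASS_KG, ANGEL_MASS_KG)  # assumed: Kd scaled the same way

print(f"Kp = {kp:.2f}")
print(f"Kd = {kd:.3f}")
```

The scaled Kp comes out a little above 10, which rounds to the Kp=10 used for training.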

With properly scaled gains, Angel D01 settled at -1.7° of pitch. The commercial robot, despite its mature engineering, settled at -11.4°. A lighter robot with correctly tuned gains can actually achieve a more level stance — the lower mass means less gravitational sag at the joints.

This gain scaling turned out to be the single most impactful change in the entire project — more important than any reward function tuning. With Kp=25, later RL training plateaued at mediocre performance. With Kp=10, the same training setup produced a robot that actually walks.

What Comes Next

Angel D01 now holds a stable standing pose under gravity with nothing but PD control. No neural network, no balance algorithm — just the right joint angles and properly scaled gains. This is the invisible foundation that makes everything else possible.

In the next post, we'll add a neural network and see if it can learn to walk — reward shaping, domain randomization pitfalls, and why a 40x penalty weight difference can be the difference between a robot that walks and one that just stands still.